Fresh off their third Top500 win for Frontier – now with an 8.4% higher Linpack score – the HPC team at Oak Ridge National Laboratory had some exciting news to share today. Frontier – the first U.S. exascale system and first official Linpack exascale system – has passed its acceptance and is taking on grand scientific challenges.

“Acceptance of Frontier took place at the end of December 2022, and the Frontier HPE Cray EX system fully entered the user program at the beginning of April 2023,” Oak Ridge shared with HPCwire in a statement. “Since then, Frontier has been made available to all of the OLCF allocation programs: INCITE, ALCC, and DD, including ECP. We now have more than 1,000 users with access to Frontier.”

(Respectively, those programs are: the Innovative and Novel Computational Impact on Theory and Experiment program; the Advanced Scientific Computing Research [ASCR] Leadership Computing Challenge; the Director’s Discretionary program; and the Exascale Computing Project.)

When we spoke with Frontier Project Director Justin Whitt last June, he walked us through the steps that were necessary before the acceptance process could even begin. “We’ve got to get all the production software on the system, from the network software, to the programming environments to all that, get it to what we will use when we actually have researchers on the system. Once we have that done, and everything’s checked out, we will start the acceptance process on the machine,” he said.

Exascale Computing Project Director Doug Kothe further discussed how rigorous the acceptance process is: “There’s functionality: do basic things that we need work? There’s performance: are we getting the performance out of the system? Certainly all indications are based on the HPL [High Performance Linpack] run that we are. And then there’s stability and stability is the one that’s most challenging. Essentially, surrogate workloads that mimic actual production workloads are run for weeks on the system. And there’s very specific metrics in terms of the percent of jobs that have to complete and the percent of those jobs that get the right answer, etc. So acceptance is pretty onerous. And so we feel confident that after that period, the machine will be fairly well shaken out for us to get on.”

All that hard work putting Frontier through its paces also translated into an improved score on the new Top500 list, published yesterday in tandem with the International Supercomputing Conference (ISC) in Hamburg. Despite having a marginally smaller peak configuration (0.6% fewer flops, to be precise), Frontier turned in a Linpack score that was 92 petaflops higher than its previous entry, going from 1.102 Linpack exaflops on the November 2022 list to 1.194 Linpack exaflops on the new list. As a testament to how big an improvement that is, those additional flops, if packaged as a stand-alone system, would be enough for an 8th-place finish on the list.

The fact that the Top500 system configuration is smaller tells you that all that additional Linpack goodness was achieved through tuning, optimizations and – we’ve now learned – frequency adjustments.

We reached out to Al Geist, Chief Technology Officer for the Oak Ridge Leadership Computing Facility (OLCF) and the ECP, to get the scoop on how they squeezed 8.4% more flops out of a (slightly) smaller system while using only 7% more power (Frontier’s energy efficiency actually went up slightly).
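Why does efficiency rise when power goes up? Because the Linpack score grew faster than the power draw. The quick sketch below checks this using only the figures quoted in this article (1.102 and 1.194 Linpack exaflops, and roughly 7% more power, which we treat as an exact factor of 1.07 purely for illustration). It is a back-of-the-envelope check, not the Top500's or ORNL's own methodology.

```python
# Back-of-the-envelope check of Frontier's Top500 gains, using only figures
# quoted in the article. Treating the 7% power increase as an exact factor
# of 1.07 is an assumption made for illustration.

RMAX_NOV_2022_EF = 1.102  # Linpack exaflops, November 2022 list
RMAX_JUN_2023_EF = 1.194  # Linpack exaflops, June 2023 list
POWER_FACTOR = 1.07       # "only 7% more power" (assumed exact)


def linpack_gain(old_ef: float, new_ef: float) -> tuple[float, float]:
    """Return (absolute gain in petaflops, relative gain in percent)."""
    gain_pf = (new_ef - old_ef) * 1000.0  # 1 exaflop = 1,000 petaflops
    gain_pct = (new_ef / old_ef - 1.0) * 100.0
    return gain_pf, gain_pct


def efficiency_change(old_ef: float, new_ef: float, power_factor: float) -> float:
    """Relative change in flops per watt, in percent."""
    perf_factor = new_ef / old_ef
    return (perf_factor / power_factor - 1.0) * 100.0


gain_pf, gain_pct = linpack_gain(RMAX_NOV_2022_EF, RMAX_JUN_2023_EF)
eff_pct = efficiency_change(RMAX_NOV_2022_EF, RMAX_JUN_2023_EF, POWER_FACTOR)

print(f"Linpack gain: {gain_pf:.0f} PF ({gain_pct:.2f}%)")
print(f"Flops-per-watt change: {eff_pct:+.1f}%")
```

Run as written, this reports a gain of 92 PF (8.35%, which rounds to the 8.4% cited above) and a flops-per-watt improvement of roughly 1.3%. That modest efficiency gain is consistent with Geist's "went up slightly."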

“Last year Frontier was able to hit 1.1 exaflops with 9,248 nodes, even though we were not running the nodes at their full speed. We had the maximum frequency dialed down about 7%, and we had reduced the maximum power to the GPUs to 500W,” Geist shared by email.

“Since that time, Frontier has become more robust and we now run the GPUs at 560W and at their full frequency for all our users on the system. Additionally, the ROCm libraries are getting more optimizations and the HPE team added their optimizations as well.

“So, when we reran HPL this year, we got the 92 petaflops speed increase because the nodes are running at full speed, because of improvements in the AMD libraries, and because of further optimizations from the HPE team. This result shows that Frontier continues to mature.

“We note that there is still more performance available in Frontier. The latest 1.19 exaflops result used only 9,212 nodes of the 9,472 nodes that are in Frontier,” Geist told us.
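Geist's node counts also let us estimate how much each node contributed in the two runs. The sketch below divides each Linpack result by the number of nodes used and counts the nodes left out of the 2023 run. Treating Rmax per node as a simple average is an assumption: real HPL scaling is not perfectly linear, so these numbers are rough indicators only.

```python
# Average Linpack throughput per node and unused nodes, from figures quoted
# in the article. Rmax / nodes is a simple average (an assumption); HPL does
# not scale perfectly linearly with node count.

RUNS = {
    "Nov 2022": (1.102, 9248),  # (Linpack exaflops, nodes used)
    "Jun 2023": (1.194, 9212),
}
TOTAL_NODES = 9472  # nodes in Frontier, per Geist


def tflops_per_node(rmax_ef: float, nodes: int) -> float:
    """Average Linpack teraflops delivered per node."""
    return rmax_ef * 1e6 / nodes  # 1 exaflop = 1,000,000 teraflops


for label, (rmax_ef, nodes) in RUNS.items():
    print(f"{label}: {tflops_per_node(rmax_ef, nodes):.1f} TF/node on {nodes:,} nodes")

unused = TOTAL_NODES - RUNS["Jun 2023"][1]
print(f"Nodes not used in the 2023 run: {unused} "
      f"({unused / TOTAL_NODES:.1%} of the machine)")
```

By this measure, average per-node throughput rose from about 119.2 TF to about 129.6 TF, roughly 8.8%. That is a little more than the 8.4% system-level gain, because the 2023 run used 36 fewer nodes. The 260 idle nodes (about 2.7% of the machine) are the untapped headroom Geist is pointing to.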

What having an exascale machine means for science

“Every one of our [ECP] applications is targeting a very specific problem that’s really unachievable and unattainable without exascale resources,” Kothe told HPCwire when we met with him at ORNL last year. “You need lots of memory and big compute to go after these big problems. So without exascale, a lot of these problems would take months or years to address on a petascale system or they’re just not even attainable.”

Frontier has now been put into service on a number of research projects that can feasibly be advanced only with a machine of this speed and scale.

As detailed by Oak Ridge, here are some of the studies underway on Frontier:

- ExaSMR: Led by ORNL’s Steven Hamilton, this study seeks to cut the long timelines and high front-end costs of advanced nuclear reactor design by using exascale computing power to simulate modular reactors that would be not only smaller but also safer, more versatile and more customizable in size than the huge traditional reactors that power cities.

- Combustion PELE: This study, named for the Hawaiian goddess of fire and led by Jacqueline Chen of Sandia National Laboratories, is designed to simulate the physics inside an internal combustion engine in pursuit of developing cleaner, more efficient engines that would reduce carbon emissions and conserve fossil fuels.

- Whole Device Model Application (WDMApp): This study, led by Amitava Bhattacharjee of Princeton Plasma Physics Laboratory, is designed to simulate the magnetically confined fusion plasma – a boiling stew of charged nuclear particles hotter than the sun – necessary for the contained reactions to power nuclear fusion technologies for energy production.

- WarpX: Led by Jean-Luc Vay of Lawrence Berkeley National Laboratory, this study seeks to simulate smaller, more versatile plasma-based particle accelerators, which would enable scientists to design particle accelerators for many applications from radiation therapy to semiconductor chip manufacturing and beyond. The team’s work won the Association for Computing Machinery’s 2022 Gordon Bell Prize, which recognizes outstanding achievement in high-performance computing.

- ExaSky: This study, led by Salman Habib of Argonne National Laboratory, seeks to expand the size, scope and accuracy of simulations for complex cosmological phenomena, such as dark energy and dark matter, to uncover new insights into the dynamics of the universe.

- EQSIM: Led by LBNL’s David McCallen, this study is designed to simulate the physics and tectonic conditions that cause earthquakes to enable assessment of areas at risk.

- Energy Exascale Earth System Model (E3SM): This study, led by Sandia’s Mark Taylor, seeks to enable more accurate and detailed predictions of climate change and its effect on the national and global water cycle by simulating the complex interactions between the large-scale, mostly 2D motions of the atmosphere and the smaller, mostly 3D motions that occur in clouds and storms.

- Cancer Distributed Learning Environment (CANDLE): Led by Argonne’s Rick Stevens, this study seeks to develop predictive simulations that could help identify promising cancer treatments and streamline their trials, shaving years off lengthy, expensive clinical studies.

“Frontier represents the culmination of more than a decade of hard work by dedicated professionals from across academia, private business and the national laboratory complex through the Exascale Computing Project to realize a goal that once seemed barely possible,” Kothe elaborated in a post published today on the ORNL website. “This machine will shrink the timeline for discoveries that will change the world for the better and touch everyone on Earth.”

“I don’t think we can overstate the impact Frontier promises to make for some of these studies,” Whitt added. “The science that will be done on this computer will be fundamentally different from what we have done before with computation. Our early research teams have already begun exploring fundamental questions about everything from nuclear fusion to forecasting earthquakes to building a better combustion engine.”

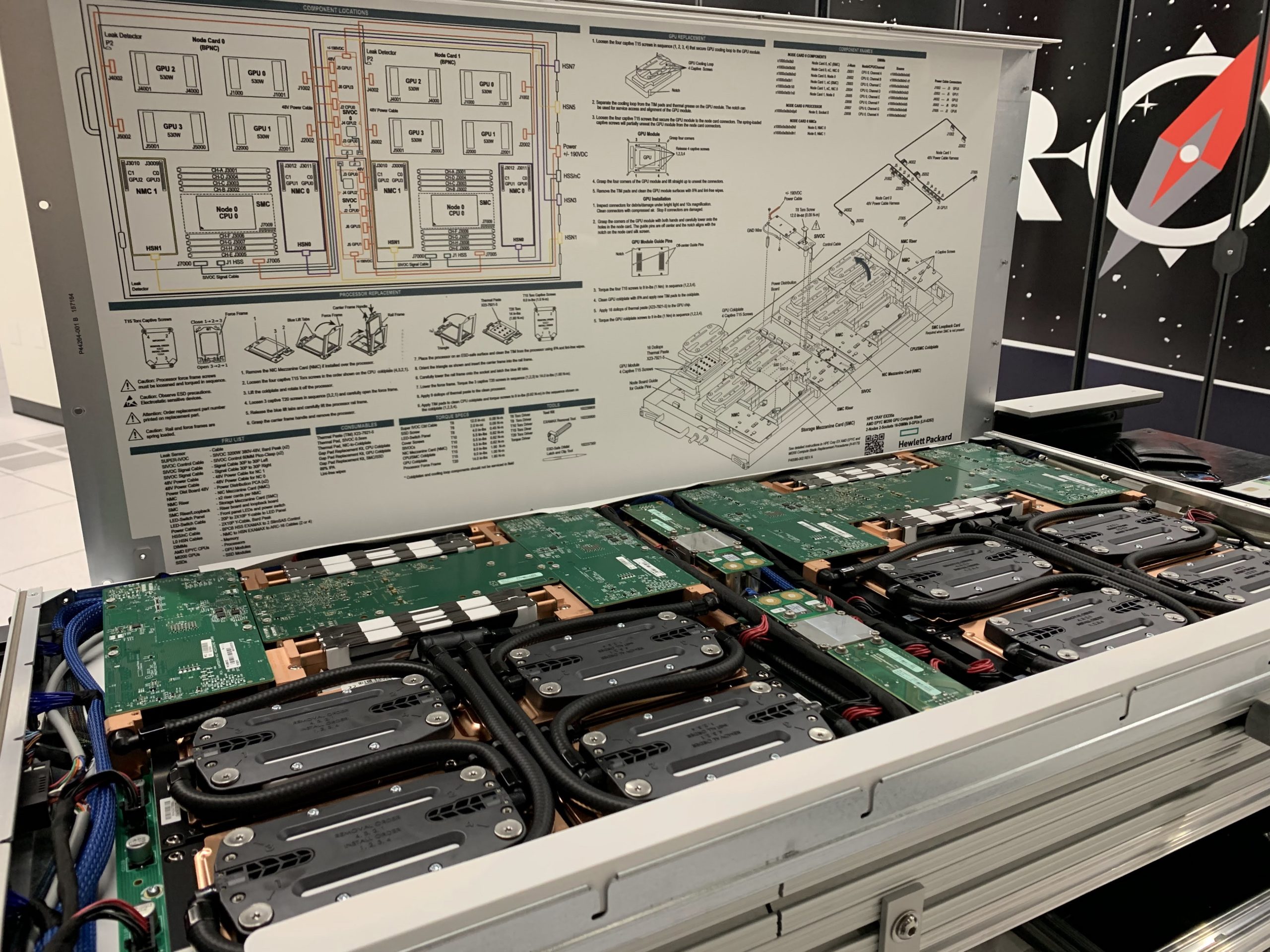

Frontier is an HPE Cray EX system that spans more than 9,400 nodes, each with one AMD Epyc CPU and four AMD Instinct MI250X GPUs.